Today, we crossed a major milestone.

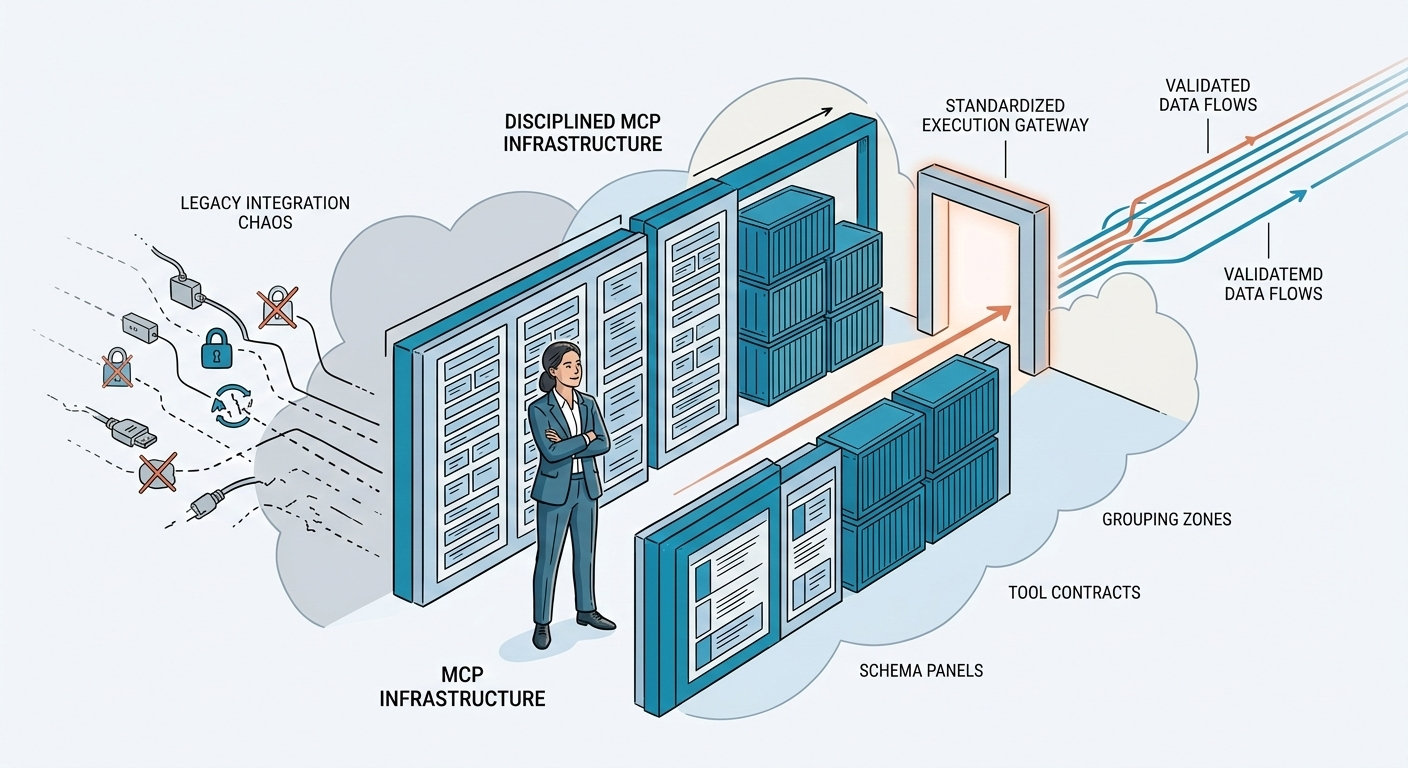

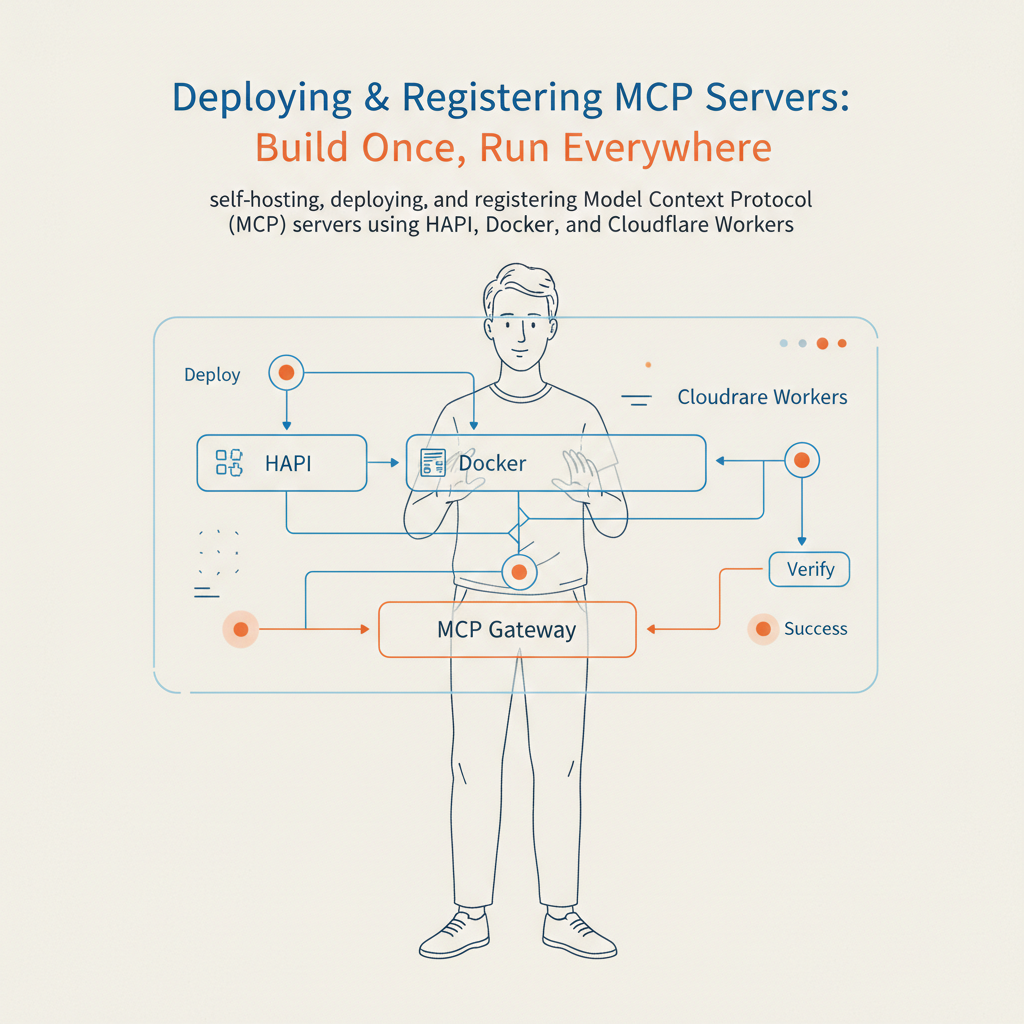

👉 The HAPI MCP Registry is now available in the ChatGPT marketplace, which officially aligns it with the OpenAI ecosystem.

And this is bigger than a feature release.

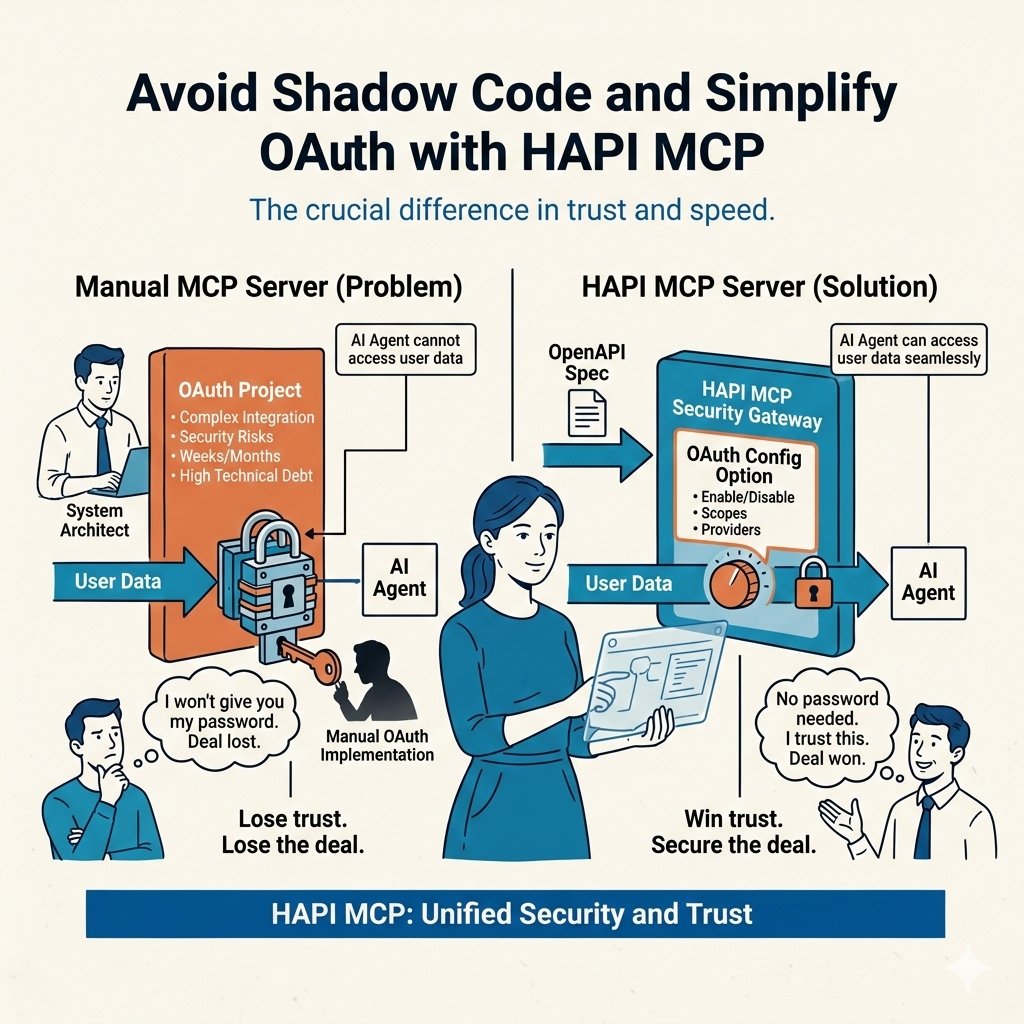

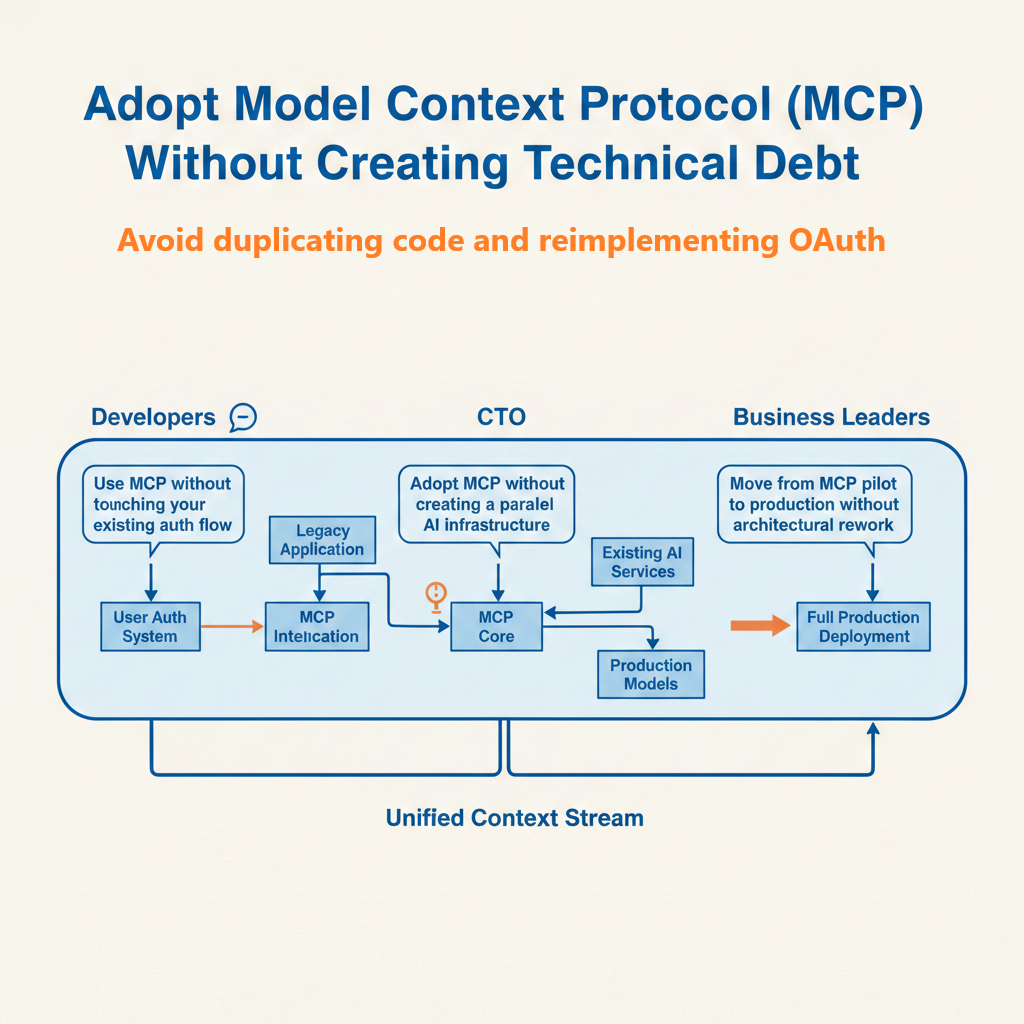

This is a shift in how APIs and AI connect.