AI Automation in Enterprises: The Shift from Experimentation to Operations

Enterprise AI is entering a new phase. Not the hype phase. Not the experimentation phase. The operational phase — where organizations must make AI safe, governed, and useful for real teams.

Over the last year, a clear pattern has emerged inside large enterprises experimenting with AI automation. What starts as scattered experimentation quickly evolves into a structured platform strategy.

The organizations that succeed are not the ones deploying the best model. They are the ones building the right architecture around the model.

And that architecture almost always follows the same evolution.

In many large organizations today, AI adoption is moving through three recognizable phases.

Phase 1 — AI Experimentation

This is the discovery phase.

Teams explore tools like:

- open-source LLM frameworks

- local model hosting

- developer AI assistants

- simple workflow builders

- prototype chat interfaces

The goal is simple:

Understand what AI can do.

Developers experiment. Innovation teams build proofs of concept. Some teams automate small tasks.

At this stage, the environment looks chaotic — many tools, many ideas, little governance.

But experimentation is necessary.

It helps organizations learn where AI creates real value.

Phase 2 — Governance and Platform Infrastructure

Once experimentation shows value, leadership begins asking harder questions.

Questions like:

- Where are these models running?

- Who has access to sensitive data?

- How do we audit AI decisions?

- How do we prevent security risks?

- How do we ensure regulatory compliance?

This is when enterprise AI platforms emerge.

Instead of scattered experiments, organizations build structured environments that include:

- model infrastructure

- access monitoring

- governance controls

- compliance frameworks

- internal tooling

The focus shifts from experimentation to control and stability.

This is also where concepts like AI sovereignty become central.

Phase 3 — Real Automation and Integration

This is where things get interesting. With a governed platform in place, organizations can finally focus on making AI useful for real teams.

The focus shifts from:

"What can the model answer?"

to

"What can AI actually do inside the business?"

This is where integration architecture becomes critical. AI is only useful when it can interact with internal systems — APIs, databases, analytics platforms, CRMs, ticketing tools, and more.

That means:

- automating workflows

- querying internal systems

- generating operational reports

- coordinating actions across tools

And this reveals the real bottleneck in enterprise AI adoption: integration.

AI Sovereignty as a Strategic Priority

For regulated industries — telecom, finance, healthcare, government — AI cannot operate as a black box.

Organizations need control over four areas: compute, data, software, and operations.

Compute Sovereignty

Models must run on trusted infrastructure.

Sometimes that means private cloud. Sometimes internal clusters. Sometimes controlled hybrid environments.

Data Sovereignty

Sensitive information must remain within enterprise boundaries.

This includes:

- customer data

- operational systems

- internal documentation

- proprietary analytics

Software Sovereignty

Enterprises cannot depend entirely on a single vendor ecosystem.

They need modular architectures that allow them to:

- switch providers

- replace components

- maintain long-term platform control
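One way to keep providers swappable is to have application code depend on a small interface rather than on a vendor SDK. The sketch below is illustrative only: `TextModel`, `LocalModel`, `HostedModel`, and `summarize` are hypothetical names, and the two model classes are stand-ins that return canned strings instead of calling a real service.

```python
from typing import Protocol


class TextModel(Protocol):
    """Hypothetical interface that application code depends on."""

    def complete(self, prompt: str) -> str: ...


class LocalModel:
    """Stand-in for a model served from internal infrastructure."""

    def complete(self, prompt: str) -> str:
        return f"[local] {prompt}"


class HostedModel:
    """Stand-in for a model reached through an external provider."""

    def complete(self, prompt: str) -> str:
        return f"[hosted] {prompt}"


def summarize(model: TextModel, text: str) -> str:
    # The caller only knows the interface, so the provider can be replaced
    # without touching this function.
    return model.complete(f"Summarize: {text}")


print(summarize(LocalModel(), "Q3 incident report"))
print(summarize(HostedModel(), "Q3 incident report"))
```

Because `summarize` never names a concrete provider, switching from one to the other is a change at the call site rather than a rewrite.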

Operational Sovereignty

Every AI action must be:

- auditable

- observable

- governed

Without these capabilities, large organizations simply cannot deploy AI safely.
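To make "auditable" concrete, here is a minimal sketch of one possible approach: wrapping every agent-callable function so that each call leaves a record, whether it succeeds or fails. The names `audited`, `AUDIT_LOG`, and `close_ticket` are assumptions made for illustration, and the in-memory list stands in for whatever audit store an organization actually uses.

```python
import functools
import json
import time
from typing import Any, Callable

AUDIT_LOG: list[dict[str, Any]] = []  # in-memory stand-in for a real audit store


def audited(action_name: str) -> Callable:
    """Record every call to the wrapped function, including failures."""

    def decorator(func: Callable) -> Callable:
        @functools.wraps(func)
        def wrapper(*args: Any, actor: str, **kwargs: Any) -> Any:
            entry = {"action": action_name, "actor": actor, "ts": time.time()}
            try:
                result = func(*args, **kwargs)
                entry["status"] = "ok"
                return result
            except Exception as exc:
                entry["status"] = "error"
                entry["error"] = repr(exc)
                raise
            finally:
                # Runs on both success and failure, so no call goes unrecorded.
                AUDIT_LOG.append(entry)

        return wrapper

    return decorator


@audited("close_ticket")
def close_ticket(ticket_id: str) -> str:
    return f"ticket {ticket_id} closed"  # hypothetical action with canned output


close_ticket("T-100", actor="agent-7")
print(json.dumps(AUDIT_LOG, indent=2))
```

Requiring `actor` as a keyword argument is a deliberate choice in this sketch: an action cannot be invoked without stating who, or which agent, is responsible for it.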

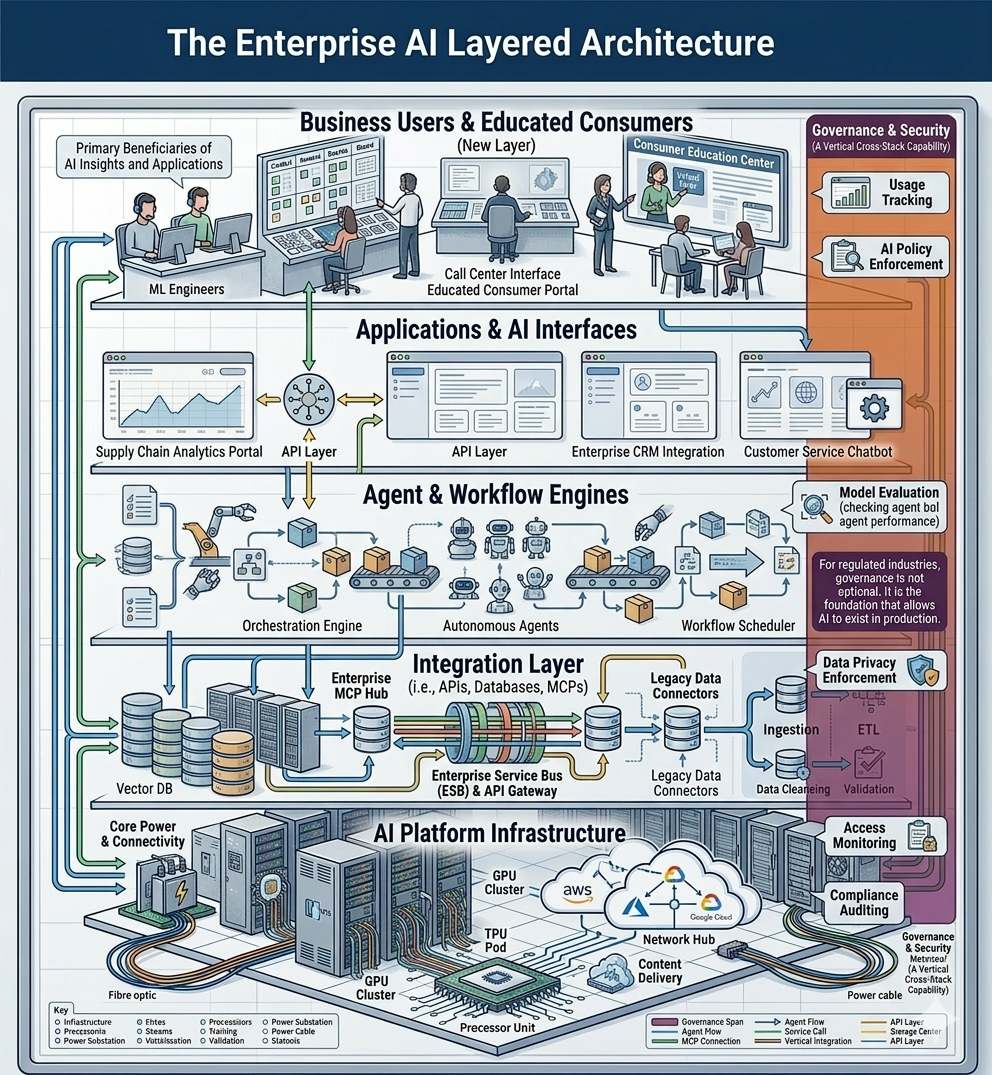

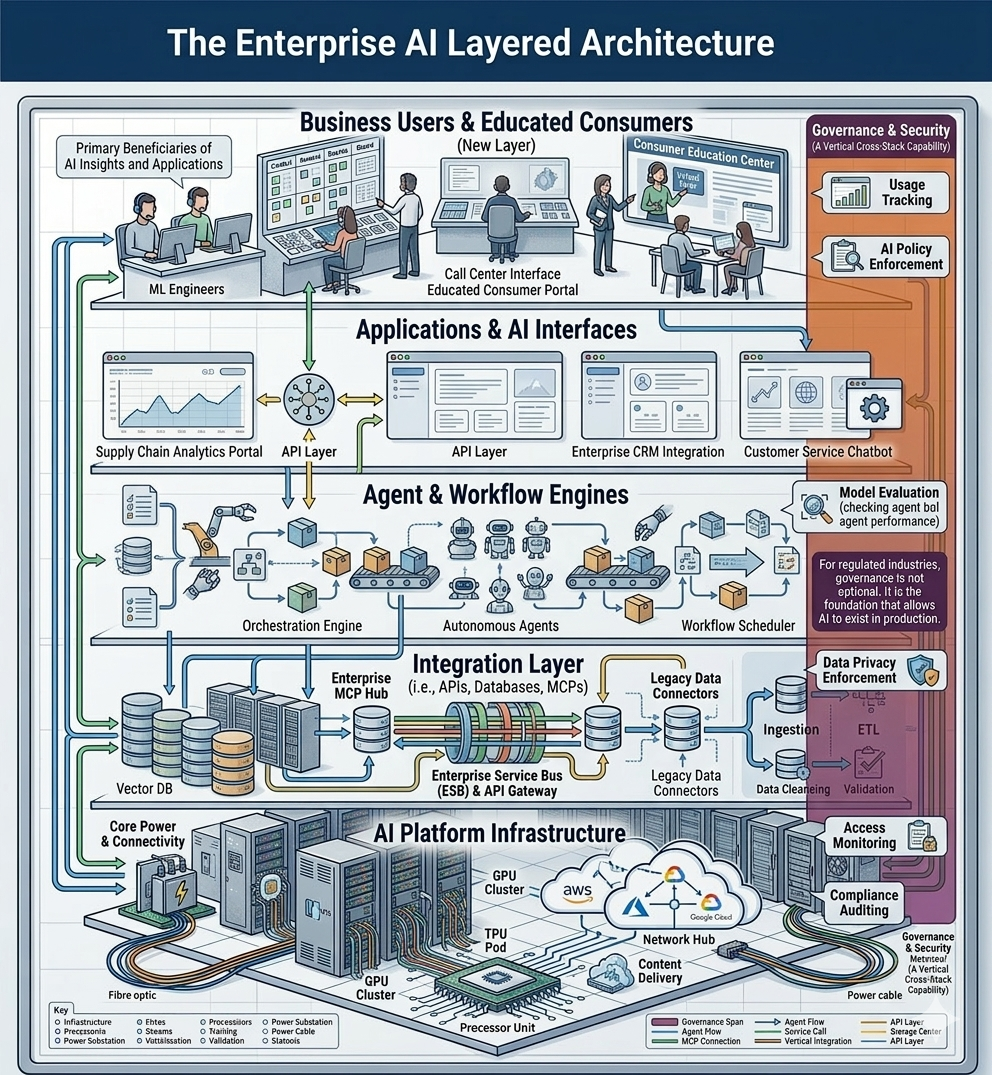

The Real Enterprise AI Architecture

Many people imagine enterprise AI as a single platform.

The reality is very different.

Enterprise AI typically operates as a layered architecture, and a typical stack looks something like this.

Layer 1 — Business Users and Educated Consumers

At the top of the stack are the people who actually need automation.

They are often not AI engineers, yet they stand to benefit most from AI-driven automation and need to learn how to use it effectively.

They are:

- operations teams

- product managers

- analysts

- support teams

- internal platform users

Their needs are practical.

They want to:

- automate repetitive work

- query internal systems

- generate reports

- coordinate workflows

- retrieve operational data

Most of them prefer natural language interactions rather than APIs.

Layer 2 — Applications and AI Interfaces

Users interact with AI through various interfaces.

Examples include:

- enterprise chat assistants

- internal AI portals

- conversational automation tools

- developer AI environments

These interfaces translate human requests into structured actions.

But by themselves, they cannot automate anything.

They still need systems behind them.

Layer 3 — Agent and Workflow Engines

This layer orchestrates automation.

Typical responsibilities include:

- coordinating multiple AI steps

- executing workflows

- managing task dependencies

- invoking tools

- connecting actions together

Examples of capabilities in this layer include:

- workflow builders

- automation orchestration

- agent coordination

- task routing

This layer turns a single AI response into a sequence of actions.
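As a rough illustration of what "managing task dependencies" can mean, the sketch below runs a set of steps in dependency order using Python's standard `graphlib` module. The function `run_workflow` and the step names are hypothetical, and each step returns a canned string where a real engine would call a model or a tool.

```python
from graphlib import TopologicalSorter
from typing import Callable

# Each step receives the results of earlier steps and returns its own result.
Step = Callable[[dict[str, str]], str]


def run_workflow(steps: dict[str, Step], depends_on: dict[str, set[str]]) -> dict[str, str]:
    """Run steps in an order that respects their declared dependencies."""
    graph = {name: depends_on.get(name, set()) for name in steps}
    results: dict[str, str] = {}
    # static_order() yields every step after all of its dependencies.
    for name in TopologicalSorter(graph).static_order():
        results[name] = steps[name](results)
    return results


steps = {
    "fetch_tickets": lambda r: "3 open tickets",
    "summarize": lambda r: f"summary of {r['fetch_tickets']}",
    "notify": lambda r: f"sent: {r['summarize']}",
}
depends_on = {"summarize": {"fetch_tickets"}, "notify": {"summarize"}}

print(run_workflow(steps, depends_on))
```

A production engine would add retries, timeouts, and parallel execution, but the core idea is the same: the workflow is declared as data, and the engine decides the order.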

Layer 4 — Integration Layer

This is where the real value appears.

AI becomes powerful only when it can interact with enterprise systems.

Examples include:

- internal APIs

- operational platforms

- databases

- analytics services

- CRM systems

- ticketing tools

Without integration, AI remains just a chatbot.

With integration, it becomes an operational automation engine.

This is where standardized integration protocols become extremely important.

Instead of every team writing custom AI integrations, organizations benefit from exposing internal capabilities through standardized interfaces that agents can discover and use safely.

Protocols designed for AI-native integrations are emerging as a key component of this architecture.

Layer 5 — AI Platform Infrastructure

Below the integration layer sits the model infrastructure.

This includes:

- hosted models

- internal LLM clusters

- inference services

- evaluation pipelines

These platforms provide the raw AI capabilities.

But by themselves they do not solve business problems.

They simply power the higher layers.

Governance and Security — A Cross-Cutting Vertical

Governance is not a separate layer. It is a vertical capability that spans the entire stack.

This includes:

- access monitoring

- model evaluation

- data privacy enforcement

- compliance auditing

- usage tracking

- AI policy enforcement

For regulated industries, governance is not optional.

It is the foundation that allows AI to exist in production.
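Data privacy enforcement is one control that can sit in front of every layer. As a minimal sketch, assuming the policy is simply "email addresses must not reach the model", a redaction step might look like the following. The function name `redact_emails` and the placeholder text are assumptions, and the pattern is deliberately simple; real deployments typically need broader detection of sensitive data than a single regular expression.

```python
import re

# Deliberately simple pattern: good enough for a sketch, not for production.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact_emails(text: str) -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL.sub("[REDACTED_EMAIL]", text)


prompt = "Contact jane.doe@example.com about the outage."
print(redact_emails(prompt))
```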

Making AI Useful for Non-Technical Teams

The technology itself is rarely the biggest obstacle.

The real challenge is usability: making AI useful for non-technical teams.

Enterprise AI systems must serve people who are not developers.

Typical users include:

- business analysts

- operations managers

- product teams

- support teams

- strategy groups

These users often ask for things like:

- "Pull customer metrics from three systems and generate a weekly report."

- "Find all tickets related to this issue and summarize root causes."

- "Create a workflow to notify teams when system health drops."

They do not want to write code.

They want AI to interact with enterprise systems safely on their behalf.

This is where agent-based workflows combined with standardized integrations become powerful.

Why Integration Becomes the Bottleneck

In almost every enterprise AI deployment, the same pattern emerges.

The hardest problem is not the model.

It is connecting AI to internal systems safely.

Organizations quickly discover challenges like:

- inconsistent APIs

- authentication complexity

- governance restrictions

- data access limitations

- fragmented internal tooling

Each integration becomes a mini-project.

This slows down adoption dramatically.

To scale AI automation, enterprises need repeatable integration patterns.

The Rise of AI-Native Integration Protocols

To address this problem, a new category of integration protocols is emerging.

These protocols are designed specifically for AI agents interacting with enterprise systems.

Instead of building custom integrations for every tool, organizations expose capabilities through standardized interfaces that agents can:

- discover automatically

- understand programmatically

- invoke safely

This approach creates several advantages.

Discoverability

Agents can inspect available capabilities dynamically.

Standardized schemas

Tools expose structured definitions of what they can do.

Reduced integration work

Developers implement once instead of building custom connectors repeatedly.

Safer automation

Governance controls can operate at the protocol level.

These characteristics make integration layers far more scalable.

In practice, many organizations are starting to implement architectures where AI agents interact with internal systems through standardized tool interfaces exposed via integration servers.

This pattern simplifies automation while maintaining enterprise security requirements.
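The discover, understand, and invoke cycle described above can be sketched in a few dozen lines. This is not the API of any particular protocol; `Tool`, `ToolRegistry`, and the `count_open_tickets` tool are hypothetical, the handler returns canned data, and the role check stands in for governance applied at the integration layer.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Tool:
    name: str
    description: str
    params: dict[str, type]      # parameter name -> expected Python type
    allowed_roles: set[str]      # governance rule checked on every call
    handler: Callable[..., Any]


class ToolRegistry:
    def __init__(self) -> None:
        self._tools: dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def discover(self, role: str) -> list[dict[str, Any]]:
        """Return machine-readable descriptions of the tools a role may use."""
        return [
            {
                "name": t.name,
                "description": t.description,
                "params": {k: v.__name__ for k, v in t.params.items()},
            }
            for t in self._tools.values()
            if role in t.allowed_roles
        ]

    def invoke(self, name: str, role: str, **kwargs: Any) -> Any:
        tool = self._tools[name]
        if role not in tool.allowed_roles:
            raise PermissionError(f"{role} may not call {name}")
        if set(kwargs) != set(tool.params):
            raise ValueError(f"expected parameters {sorted(tool.params)}")
        for key, expected in tool.params.items():
            if not isinstance(kwargs[key], expected):
                raise TypeError(f"{key} must be {expected.__name__}")
        return tool.handler(**kwargs)


registry = ToolRegistry()
registry.register(
    Tool(
        name="count_open_tickets",
        description="Count open tickets for a team.",
        params={"team": str},
        allowed_roles={"support-agent"},
        handler=lambda team: {"team": team, "open": 3},  # canned data
    )
)

print(registry.discover(role="support-agent"))
print(registry.invoke("count_open_tickets", role="support-agent", team="billing"))
```

Because permission and parameter checks live in `invoke`, every tool registered this way inherits them, which is the sense in which governance can operate at the protocol level rather than inside each integration.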

The Next Evolution: Agent Ecosystems

As enterprise AI platforms mature, another pattern is becoming clear.

AI systems are evolving from chat interfaces into agent ecosystems.

Instead of a single assistant answering questions, organizations are deploying networks of agents that can:

- execute workflows

- retrieve information

- coordinate systems

- trigger automations

- generate insights

These agents rely heavily on integration layers that expose enterprise capabilities in a consistent way.

Without this layer, agents cannot operate effectively.

With it, organizations unlock entirely new automation capabilities.

Practical Guidelines for Enterprise AI Adoption

Based on what many organizations are experiencing today, several guidelines are emerging.

1. Governance must come first

AI cannot scale without security and compliance frameworks.

Governance must be built into the architecture from the beginning.

2. Open experimentation accelerates learning

Early experimentation with open tools helps teams understand real use cases quickly.

Controlled environments allow safe exploration.

3. Integration architecture determines success

AI becomes useful only when it connects to enterprise systems.

Organizations should design integration layers intentionally.

4. Modular architectures outperform monolithic platforms

Successful AI environments combine multiple specialized tools rather than relying on a single vendor solution.

5. Agent workflows unlock real automation

The future of enterprise AI is not chatbots.

It is AI-driven operational workflows.

The Quiet Cornerstone of Enterprise AI

If there is one architectural insight becoming increasingly clear, it is this:

The integration layer determines whether enterprise AI succeeds or fails.

Without structured integration patterns, AI systems remain isolated assistants.

With the right integration architecture, they become automation engines capable of transforming enterprise operations.

Protocols designed for AI-native integrations are rapidly becoming the cornerstone of this layer.

They provide a standardized way to expose enterprise systems to AI agents safely, enabling scalable automation without rewriting integrations repeatedly.

The Path Forward

Over the next few years, enterprise AI platforms will likely converge toward a common architecture:

- governed model infrastructure

- modular orchestration layers

- AI-native integration protocols

- agent-driven workflows

- business-accessible automation interfaces

The organizations that embrace this architecture early will move faster.

Not because they have better models.

But because they have built the platform that allows AI to operate safely across the enterprise.

And once that platform exists, automation stops being an experiment.

It becomes a new operating model for the business.

FAQ: Enterprise AI automation and architecture

Q: What is enterprise AI automation?

A: Enterprise AI automation is the use of AI systems, agents, and workflows to execute business tasks across internal tools, data sources, and operational systems in a governed and secure way.

Q: Why are enterprises moving from AI experimentation to AI operations?

A: Once early pilots show value, organizations need security, compliance, observability, and repeatable delivery. The focus shifts from trying AI tools to building a governed operating platform.

Q: What does a typical enterprise AI architecture look like?

A: It usually includes business users, AI interfaces, agent and workflow engines, an integration layer connected to enterprise systems, and AI platform infrastructure. Governance and security span all of these as a cross-cutting vertical.

Q: Why is governance not optional in enterprise AI?

A: Enterprise AI must be auditable, observable, privacy-aware, and compliant before it can be trusted in production, especially in regulated industries such as finance, healthcare, telecom, and government.

Q: What is AI sovereignty in enterprise AI?

A: AI sovereignty means retaining control over compute, data, software choices, and operations so the enterprise can run AI in a trusted, governable, and vendor-resilient way.

Q: Why does the integration layer determine enterprise AI success?

A: AI creates business value only when it can safely interact with internal systems. Without integration, it remains a chatbot. With integration, it becomes an operational automation engine.

Q: What is the biggest challenge in enterprise AI adoption?

A: In most organizations, the biggest challenge is not the model. It is making AI useful for non-technical teams while connecting it safely to enterprise systems through repeatable integration patterns.

Q: What are AI-native integration protocols?

A: They are standardized ways for AI agents to discover, understand, and invoke enterprise capabilities safely, reducing custom integration work and making automation more scalable.

Q: What is the future of enterprise AI platforms?

A: The architecture is converging toward governed model infrastructure, modular orchestration, AI-native integration protocols, agent-driven workflows, and business-accessible automation interfaces.