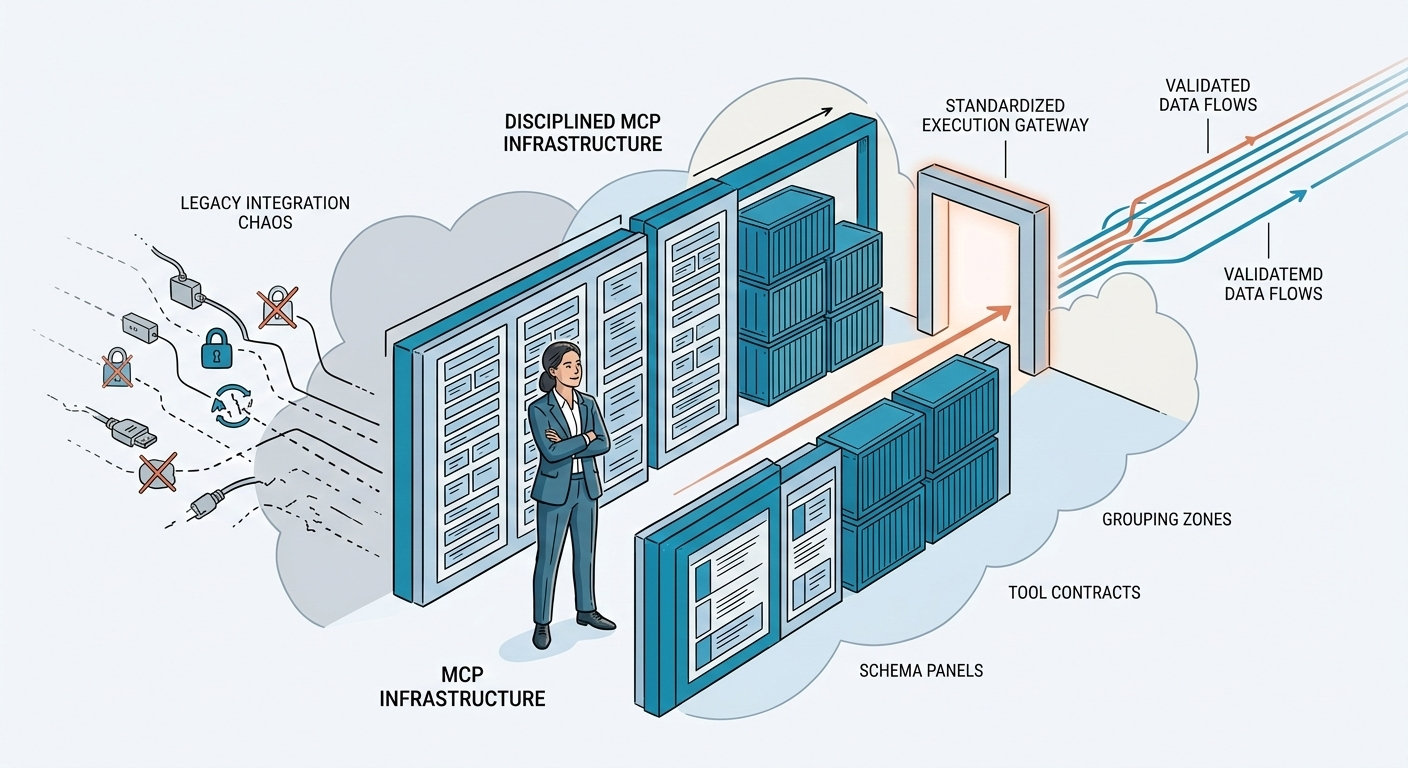

The Hidden Scaling Problem in MCP — And How to Fix It

If every AI agent needs its own custom integration... you don't have an AI strategy. You have an integration nightmare.

Traditional APIs were built for humans and frontends. AI agents change the equation.

And this is where most teams misunderstand Model Context Protocol (MCP).

They think MCP solves integration complexity automatically.

It doesn't.

It standardizes discovery and interaction — but implementation discipline still matters.

Let's break this down clearly.

Traditional APIs vs MCP: What Actually Changes?

How do traditional APIs work?

Traditional REST APIs require every client to:

- Read documentation

- Implement authentication flows

- Format requests correctly

- Handle errors manually

- Track version changes

- Maintain compatibility

If you have:

- 1 API

- 5 clients

- 3 authentication methods

- 2 versions

You don't have 1 integration problem.

You have 30.

Now replace "clients" with:

- ChatGPT connectors

- Claude Desktop

- Internal agents

- Workflow bots

- Edge agents

- Telco network AI

- Enterprise copilots

That model does not scale.

What Is Model Context Protocol (MCP)?

MCP changes the integration contract.

Instead of hardcoded REST calls, it provides:

- Runtime tool discovery

- Schema introspection

- Agent-native communication

- Structured inputs and outputs

- Standard interaction patterns

Well, at least that's the promise, and what HAPI MCP delivers - without the fragmentation, without coding, and with enterprise-grade discipline.

Any MCP-compatible agent can:

- Connect to a remote MCP endpoint

- Inspect available tools

- Understand their schema

- Execute them without custom coding

This is plug-and-play for agents.

But here's the part most people miss.

The Hidden Problem: MCP Server Fragmentation

MCP standardizes how agents talk to servers.

It does not standardize how servers are built internally.

Two teams can implement an MCP server for the same API and end up with:

- Different auth logic

- Different error handling

- Different retry policies

- Different token exchange flows

- Different schema interpretations

- Duplicated backend logic

- Security inconsistencies

Now you have a new problem:

MCP interface standardization + backend chaos.

This is where architecture discipline matters.

MCP servers are not just wrappers.

They are distributed system components.

Why MCP Servers Must Be Treated as Distributed Systems

An MCP Server:

- Runs independently

- Calls upstream APIs

- Handles authentication delegation

- Translates schemas

- Applies business logic

- Returns structured responses

That is a system.

Not a simple adapter.

If you let each team build MCP servers differently:

- Security posture varies

- OAuth flows break inconsistently

- Error semantics change

- Agent behavior becomes unpredictable

- Maintenance cost explodes

In enterprise environments, this is unacceptable.

This is exactly why HAPI MCP exists.

What is the best way to fix this problem?

HAPI MCP solves the missing layer.

It standardizes the server implementation engine, not just the protocol interface.

Instead of:

"Every team builds its own MCP server logic"

You get:

"Deploy MCP servers from OpenAPI specs — the engine handles the rest."

Let's analyze why that matters.

1️⃣ Authentication Is Centralized, Not Re-Invented

One of the hardest problems in MCP ecosystems is OAuth.

Especially 3-legged flows:

- User → Client (ChatGPT, Claude)

- Authorization Server (LinkedIn, Strava, etc.)

- Resource Server

Without discipline, each MCP server re-implements:

- PKCE

- Token exchange

- Bearer forwarding

- Refresh token handling

- Error propagation

This is standard, HAPI MCP provides a secure, consistent implementation for all servers.

Authentication is:

- Consistent

- Repeatable

- Delegated properly

- Secure by design

You don't rewrite OAuth per server.

You deploy.

2️⃣ Error Handling Becomes Deterministic

Agents rely on predictable behavior.

If one MCP server returns:

{ "error": "not_found" }

And another returns:

{ "message": "Missing resource" }

Your agent logic breaks.

HAPI MCP ensures:

- Consistent error semantics

- Standardized response shapes

- Uniform status mapping

This reduces agent hallucination and workflow failures.

3️⃣ No Business Logic Duplication

One of the silent architectural sins:

Teams duplicating logic inside MCP servers that already exists upstream.

For example:

- Re-validating data unnecessarily

- Re-implementing filtering

- Duplicating rate limiting

- Recreating transformation layers

HAPI MCP treats the server as:

- A standardized orchestration layer

- Not a logic duplication layer

It respects upstream authority.

This keeps systems clean.

4️⃣ Contract Changes Don't Break Everything

Traditional APIs break clients when contracts change.

MCP allows runtime introspection.

But if your server implementation is inconsistent:

You still break behavior.

HAPI MCP enforces:

- Schema alignment with OpenAPI

- Predictable tool exposure

- Controlled updates

- Versioning discipline

This is critical for enterprise.

5️⃣ Enterprise Governance Is Built-In

CIOs and platform teams ask:

- Who deployed this MCP server?

- Which auth flow is used?

- Where are tokens stored?

- How is auditing done?

- Can we run this on-prem?

- What about airgapped environments?

HAPI MCP answers:

- Deployable on-prem or cloud

- Same engine everywhere

- Standard behavior

- Governance-ready

- Compatible with regulated environments

This is not "just another wrapper tool."

This is infrastructure.

Business Impact: Why This Matters

Let's translate technically into business language.

Without Standardized MCP Servers

- Higher integration costs

- Inconsistent AI results

- Security risk

- Maintenance overhead

- Vendor lock chaos

- Harder audits

With HAPI MCP

- Faster AI rollout

- Lower integration cost

- Predictable agent behavior

- Enterprise-ready governance

- Reduced duplication

- AI strategy that scales

Time saved = money saved Consistency = risk reduction Standardization = long-term leverage

Frequently Asked Questions

What problem does MCP solve?

MCP standardizes how AI agents discover and interact with tools at runtime, removing hardcoded integrations.

Is MCP enough by itself?

No. MCP defines the interaction protocol, but it does not standardize server implementation discipline.

Why do MCP implementations vary?

Because teams interpret authentication, error handling, and schema translation differently unless an engine enforces consistency.

How does HAPI MCP improve MCP servers?

HAPI MCP provides a standardized execution engine that ensures:

- Unified authentication handling

- Predictable error semantics for all servers

- Schema consistency

- Enterprise-grade governance

Can HAPI MCP run on-premise?

Yes. It supports cloud and on-prem deployments, including regulated and partially disconnected environments.

Does HAPI duplicate backend logic?

No. It acts as a standardized orchestration layer and respects upstream APIs as the source of truth.

Strategic Recommendation: Treat MCP as Infrastructure

If you are building:

- Enterprise AI copilots

- Telco network APIs

- Platform team AI integrations

- Agent ecosystems

- Multi-provider connectors

You cannot treat MCP servers as lightweight wrappers.

You must treat them as:

- Standardized infrastructure components

- Governed distributed systems

- Secure orchestration layers

That is the HAPI MCP philosophy.

Final Thought

MCP is the future of agent-native integration.

But:

MCP without implementation discipline becomes fragmentation.

HAPI MCP is not about replacing the protocol.

It is about making it reliable.

If your goal is:

- AI at scale

- Enterprise governance

- Predictable agent behavior

- No duplicated backend chaos

Then the path is clear.

Standardize the engine.

Deploy with confidence.

Build once.

Scale everywhere.

That's the HAPI MCP way.