AI Cost Optimization: Lessons from Database Applied

"We already solved this problem... and somehow we forgot."

Back in the day, database engineers learned this lesson the hard way.

We didn't call it "AI cost optimization." We called it bad query design.

And it hurt.

The déjà vu: from SQL disasters to AI chatbots

I was reading a LinkedIn post about a chatbot that cost $3K to build... and $28K/month to run.

And instantly, I had flashbacks.

To teams building dynamic SQL queries like this:

SELECT * FROM users WHERE name = 'Adrian'

But instead of using parameters, they would literally inject values into strings:

"SELECT * FROM users WHERE name = '" + userInput + "'"

At first, everything worked.

Then scale happened.

And everything broke.

What went wrong back then (and now)

1. Every query looked "new" to the database

Databases rely on query plan caching.

When you use prepared statements, the database sees:

SELECT * FROM users WHERE name = ?

It builds a plan once → reuses it forever.

But with dynamic queries?

Every variation is a brand new query:

SELECT * FROM users WHERE name = 'John'

SELECT * FROM users WHERE name = 'Jane'

SELECT * FROM users WHERE name = 'Mike'

➡️ The database can't reuse the plan efficiently

➡️ It recompiles again and again

➡️ Performance drops hard

2. Complexity kills performance silently

The worse part?

It doesn't fail immediately.

It degrades slowly:

- CPU spikes

- Memory pressure increases

- Latency creeps up

- Costs rise quietly

Until one day:

"Why is everything so slow?"

3. Scale exposes the flaw

At small scale:

"It works fine."

At real scale:

"This is burning money."

Now look at the chatbot story again

Same pattern. Different technology.

Let's translate:

| Old SQL World | AI Chatbot World |

|---|---|

| Dynamic queries | Every prompt treated as new |

| No prepared statements | No semantic caching |

| No query reuse | No deduplication |

| Recompile every time | Call expensive model every time |

| CPU/memory waste | Token cost explosion |

The real problem: treating every request as unique

In the LinkedIn example:

80% of queries were just variations of the same 12 questions

But the system treated them like:

100% brand new problems.

That's the mistake.

Not the model. Not the infra. The design.

The principle we forgot

Systems should recognize patterns. Not ignore them.

Databases solved this years ago:

- Prepared statements

- Query plan caching

- Indexing strategies

But in AI systems?

We're back to:

"Let's just send everything to the model."

Unfortunately, that approach is a recipe for:

- High costs

- Unpredictable performance

- Scaling nightmares

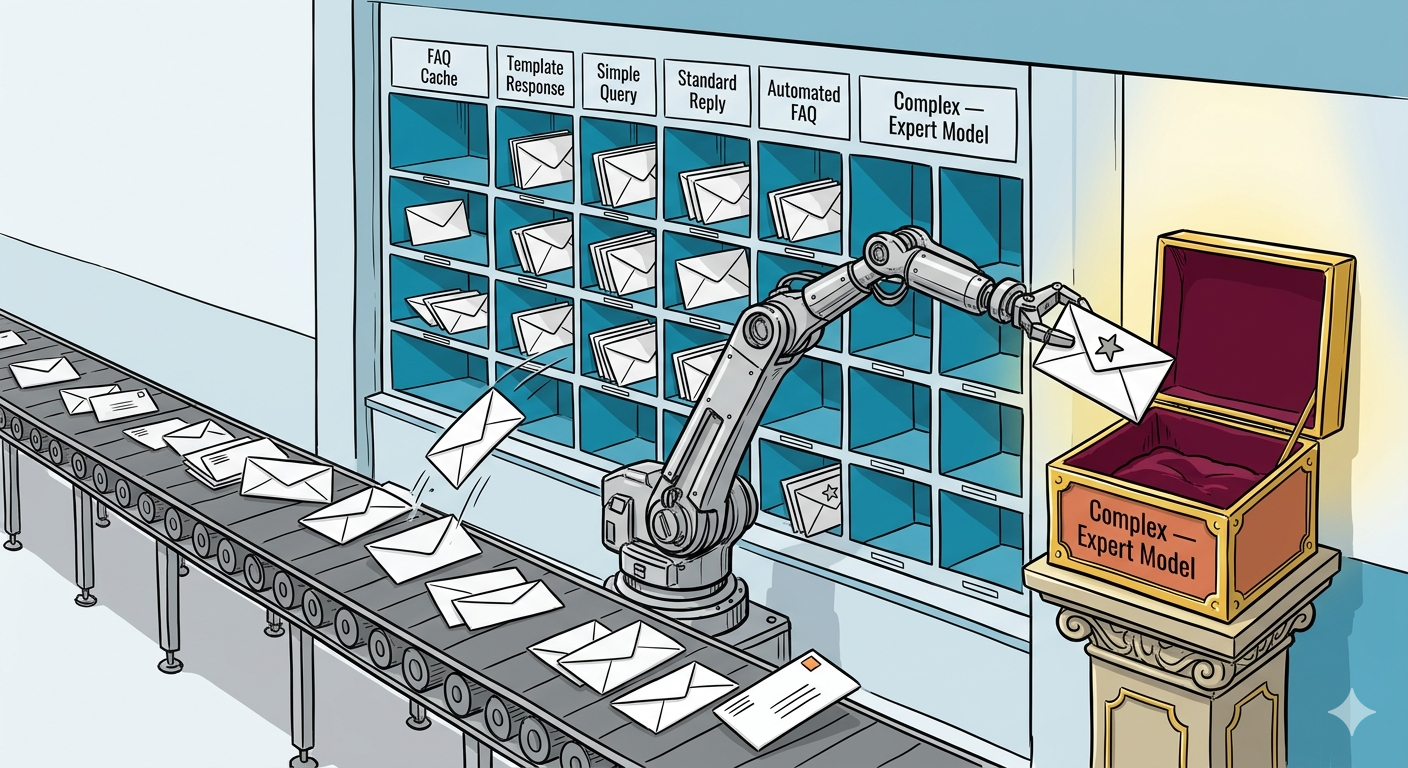

The modern "prepared statements" for AI

The fix they implemented is basically database engineering... applied to AI.

1. Semantic cache (query plan cache equivalent)

Instead of exact matches:

- Use embeddings

- Detect "close enough" questions

- Return cached answers

➡️ Same intent → same response

➡️ Zero model cost

2. Response templates (precomputed queries)

For high-frequency questions:

- Predefine structured responses

- Skip the model entirely

➡️ Instant responses

➡️ Predictable cost

3. Model routing (query optimizer)

Not all queries deserve the same treatment:

- Simple → cheap model or cache

- Complex → expensive model

➡️ Just like a query optimizer choosing execution paths

Business impact (this is what matters)

Let's translate this to what leaders actually care about:

- 75% cost reduction ($28K → $7K/month)

- Faster response times (cache hits = instant)

- Better scalability (predictable cost curve)

- Lower operational risk (no runaway bills)

The dangerous illusion: "cheap AI build"

Here's the uncomfortable truth:

The build cost is a demo metric The run cost is a business metric

And most teams optimize for the wrong one. If you focus on building something quickly without considering the long-term costs, you might end up with a system that's expensive to operate. This is especially true in AI, where every model call can incur significant costs.

Why this keeps happening

Because AI development today feels like:

- Early web development

- Early microservices

- Early cloud adoption

Everyone is focused on:

"Can we make it work?"

Instead of:

"Can we make it scale?"

History repeating itself

We've seen this before:

- Monoliths → microservices chaos

- Cloud → bill shock

- APIs → integration sprawl

Now:

AI → token burn without control

The shift: from "AI features" to "AI systems"

If you're building AI in production, this is the mindset shift:

❌ Old thinking

- Every request is unique

- Let the model handle it

- Optimize later

✅ New thinking

- Most requests are patterns

- Design for reuse first

- Control cost at the architecture level

Where this connects to MCP

This is exactly where MCP + API-first design changes the game.

Instead of:

Unstructured prompts → expensive model calls

You move toward:

- Structured tools

- Deterministic workflows

- Reusable operations

➡️ The system becomes predictable

➡️ Costs become controllable

➡️ Behavior becomes auditable

The takeaway (don't ignore this)

If your AI system treats every request as new... your bill will grow faster than your users.

And the worst part?

You won't notice until it's already expensive.

Final thought

We didn't fix dynamic SQL because it was "cool."

We fixed it because:

It was inefficient, unsafe, and impossible to scale.

AI systems are hitting that exact same moment.

The teams that win won't be the ones with the smartest models.

They'll be the ones who remember:

Efficiency is architecture, not an afterthought.

How are you designing your AI system to recognize patterns instead of treating every request as unique?